First of all, I must sincerely thank the highly talented Invoke team I worked with to realise the exploratory work described below:

- Charles Bridger, Co-Founder

- Akram Darwezeh, Lead Software Engineer

- Lloyd McIness, Software Engineer

- Craig Vaz, Super Intern #1

- Sam McGillicudy, Super Intern #2

I am fascinated by the future of spatial computing. Following my studies in mechatronics engineering and commerce, I went on to co-found a startup called Invoke with my final-year engineering project partner, Charles Bridger.

Building on the proof-of-concept project, we developed a device for dedicated object tracking to supplement the user and spatial tracking systems already showcased on mixed reality hardware at the time.

As users, we have learned to interact with virtual information in abstracted ways. Creating an intuitive interface for spatial computing depends heavily on the performance and reliability of the tracking systems. More recent reactions to the Apple Vision Pro’s eye tracking interface show how natural interactions can overcome more cumbersome solutions.

Tracker

More about the Invoke Tracker

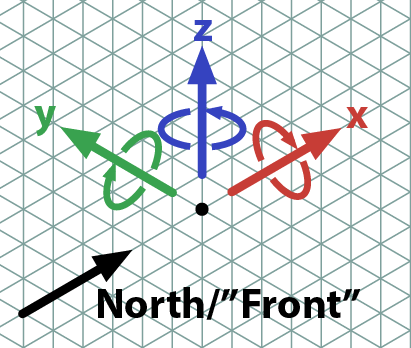

The purpose of the Tracker is to allow a target physical object to become interactive in a mixed reality environment. The Tracker was designed to be mounted to the target object and to send realtime 6DOF data (X, Y, Z position and yaw, pitch, roll rotation) for anchoring virtual objects or interfaces.
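To make the anchoring idea concrete, here is a minimal sketch of how a 6DOF pose could be used to place a virtual point that is fixed relative to the tracked object. The `Pose6DOF` class, the `anchor_to_world` function, the units (metres and radians) and the rotation order (yaw about Z, then pitch about Y, then roll about X) are all assumptions made for illustration; the Tracker's actual data format and conventions are not described here.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose6DOF:
    """Position in metres, rotation in radians (assumed units)."""
    x: float
    y: float
    z: float
    yaw: float    # rotation about the Z axis (assumed convention)
    pitch: float  # rotation about the Y axis (assumed convention)
    roll: float   # rotation about the X axis (assumed convention)


def rotation_matrix(pose):
    """Return the 3x3 rotation R = Rz(yaw) * Ry(pitch) * Rx(roll)."""
    cy, sy = math.cos(pose.yaw), math.sin(pose.yaw)
    cp, sp = math.cos(pose.pitch), math.sin(pose.pitch)
    cr, sr = math.cos(pose.roll), math.sin(pose.roll)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


def anchor_to_world(pose, local_point):
    """Map a point fixed in the tracker's frame into world coordinates."""
    r = rotation_matrix(pose)
    offset = (pose.x, pose.y, pose.z)
    return tuple(
        sum(r[row][col] * local_point[col] for col in range(3)) + offset[row]
        for row in range(3)
    )


# Hypothetical example: a virtual marker 10 cm along the tracker's local X
# axis, with the tracker at (1, 2, 3) and turned 90 degrees in yaw.
pose = Pose6DOF(1.0, 2.0, 3.0, math.pi / 2, 0.0, 0.0)
marker_world = anchor_to_world(pose, (0.1, 0.0, 0.0))
```

With the tracker yawed by 90 degrees, the marker's local X offset ends up along the world Y axis, so the virtual point follows the object as it turns.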

For example, this could be used on a golf club, tennis racket or other sports equipment to allow you to practice your swing. With a mixed reality headset or display, you could hit a virtual ball, or analyse your swing and technique to improve your form. A great prototype example of this type of interaction is shown in this Table Tennis demo by Leap Motion (now Ultraleap).

Another application is music education. The popular video game Guitar Hero uses a guitar-style controller for players to play along to songs. The simplified instrument is accessible, but it means the skills don’t translate to actually being able to play a real guitar.

The Tracker would allow a real instrument to become a virtual interface. This allows music data (e.g. a MIDI file) to be overlaid on the fretboard or finger positions, instead of sheet music or tablature, which are not as easily digested by new players.
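As a rough illustration of the overlay idea, the sketch below maps a MIDI note number to the places it can be played on a guitar in standard tuning, and to a distance along the neck where a marker could be drawn. The function names, the 12-fret limit and the 0.648 m scale length are assumptions for the example, not details of anything Invoke built.

```python
# MIDI note numbers of the open strings in standard tuning (E2 A2 D3 G3 B3 E4),
# keyed by string number, where string 6 is the low E.
OPEN_STRINGS = {6: 40, 5: 45, 4: 50, 3: 55, 2: 59, 1: 64}


def fret_options(midi_note, max_fret=12):
    """List every (string, fret) pair that sounds the given MIDI note."""
    options = []
    for string in sorted(OPEN_STRINGS, reverse=True):
        fret = midi_note - OPEN_STRINGS[string]
        if 0 <= fret <= max_fret:
            options.append((string, fret))
    return options


def fret_distance_from_nut(fret, scale_length_m=0.648):
    """Distance from the nut to a fret under equal temperament.

    The default scale length is an assumed, typical value.
    """
    return scale_length_m * (1.0 - 2.0 ** (-fret / 12.0))


# MIDI note 64 (E4) can be played in three places within the first 12 frets.
print(fret_options(64))
print(round(fret_distance_from_nut(12), 3))
```

Each returned position could then be expressed in the tracker's local frame, so the highlighted fret stays attached to the neck as the instrument moves.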

How it Works

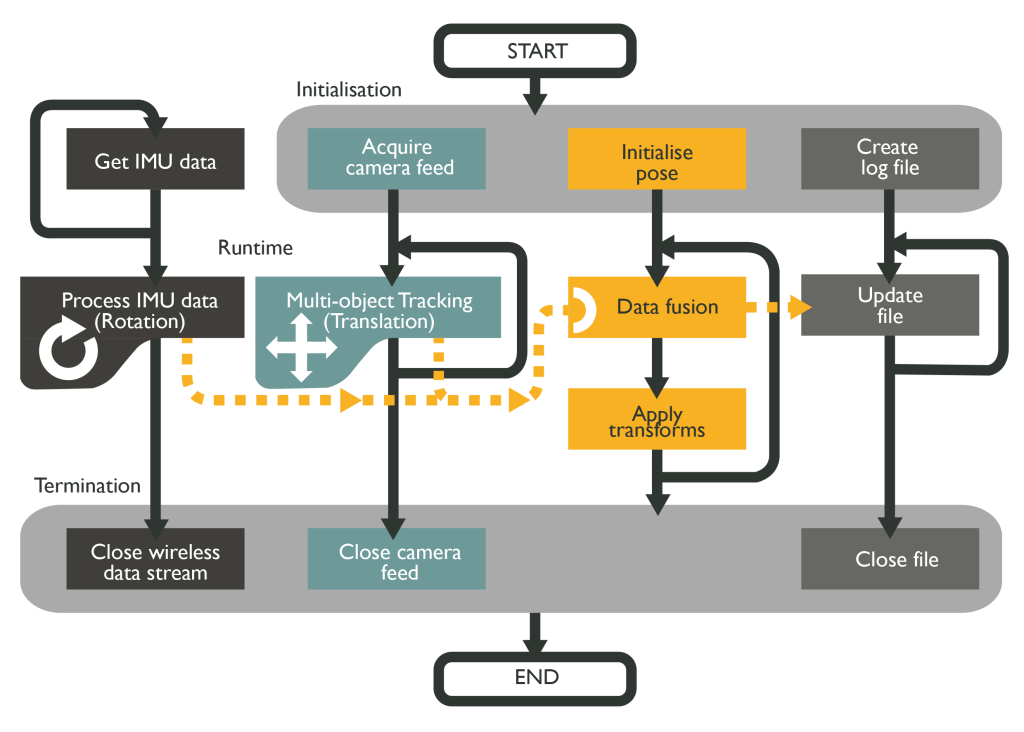

The tracking system was designed to be compatible with “Inside-Out” tracking systems. This is where sensors on the headset are used to run SLAM algorithms, allowing for precise anchored placement of virtual objects without the need for externally positioned base stations or sensors. As a result, we chose to build an infrared LED constellation system, combining the output of computer vision with an inertial measurement unit (IMU) through statistical data fusion in real time. This method is similar to how VR controllers are tracked relative to standalone headsets.
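The fusion method itself is not detailed here, so the following is only a stand-in: a one-dimensional complementary filter, which is one of the simplest ways to combine a fast but drifting inertial signal with a slower absolute optical measurement. The class name, update rates, gain and gyro bias are all assumed values chosen for the illustration.

```python
from dataclasses import dataclass


@dataclass
class ComplementaryFilter:
    """Fuses a gyro rate (fast, drifts) with an optical angle (slow, absolute)."""
    gain: float          # fraction of the optical error removed per correction
    angle: float = 0.0   # current fused estimate in radians

    def predict(self, gyro_rate, dt):
        # Integrate the IMU rate between camera frames.
        self.angle += gyro_rate * dt

    def correct(self, optical_angle):
        # Pull the estimate toward the camera measurement.
        self.angle += self.gain * (optical_angle - self.angle)


def simulate(duration_s=2.0, imu_hz=1000, camera_every=10,
             true_rate=1.0, gyro_bias=0.05, gain=0.2):
    """Return (fused error, gyro-only error) in radians after the run."""
    dt = 1.0 / imu_hz
    fused = ComplementaryFilter(gain=gain)
    gyro_only = 0.0
    true_angle = 0.0
    for step in range(1, int(round(duration_s * imu_hz)) + 1):
        true_angle = true_rate * step * dt
        gyro = true_rate + gyro_bias       # biased rate measurement
        fused.predict(gyro, dt)
        gyro_only += gyro * dt
        if step % camera_every == 0:       # a camera frame arrives
            fused.correct(true_angle)
    return fused.angle - true_angle, gyro_only - true_angle


fused_error, gyro_only_error = simulate()
```

In this toy run the gyro-only estimate drifts steadily with the bias, while the fused estimate stays close to the true angle because each optical frame removes part of the accumulated error. A full 6DOF tracker would more likely use a Kalman-style filter over position and orientation, but the division of labour between the two sensors is the same.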

Challenges

Commercially, Invoke struggled to gain traction for two reasons:

- Development kits were difficult to sell at the time (2018), as there were very few potential customers with mixed reality devices. The emerging devices (Magic Leap One, Microsoft HoloLens) were much more limited than we had anticipated, particularly when it came to field of view (FOV). Small FOVs made the virtual elements of our tracked objects underwhelming, even if the tracking itself was performing well.

- These platforms were not open-source, and developers often had very restricted access to the camera streams (essential to our tracking method). This made it very difficult for third parties to create such peripherals. An exception is the Triad HDK system compatible with Steam VR. While this delivers good tracking performance, it is an “outside-in” tracking system, so it defeats the portable use case we had in mind for the Tracker.

Technically, one challenge we discovered was minimising the motion-to-photon latency. Minimising this latency is important, but not absolutely critical, in VR-only systems: the brain can accommodate some delay without breaking immersion or making the user feel unbalanced or nauseous. However, with a waveguide display on an AR headset (such as the Magic Leap or Microsoft HoloLens), even latencies under 20 ms are much more noticeable, because the real world is seen with no delay at all. If you rotate your head side to side while looking at a virtual object on a table, for example, you can see it oscillate in space as it tries to catch up to your realtime view. This is where pass-through displays, such as those on the Meta Quest 3 and Apple Vision Pro, are stronger solutions. Pass-through displays not only allow for a wider FOV and crisper colour and shadows, they also delay the feed of the real world by the same motion-to-photon latency as the virtual objects, so those objects feel much more anchored in the space.
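A quick back-of-the-envelope calculation shows why small latencies matter on a see-through display. During the latency window the head keeps turning, so the virtual object is drawn where it should have been a moment ago. The head rotation rate and viewing distance below are assumed, illustrative numbers, and the function name is hypothetical.

```python
import math


def misregistration(head_rate_deg_s, latency_ms, viewing_distance_m):
    """Angular and positional error of a virtual object during head rotation."""
    # Angle the head sweeps through before the display catches up.
    error_deg = head_rate_deg_s * (latency_ms / 1000.0)
    # Apparent sideways offset of the object at the given distance.
    offset_m = viewing_distance_m * math.tan(math.radians(error_deg))
    return error_deg, offset_m


# Assumed scenario: a moderate head turn of 100 deg/s, 20 ms of latency,
# and a virtual object sitting 1 m away on a table.
error_deg, offset_m = misregistration(100.0, 20.0, 1.0)
print(f"{error_deg:.1f} deg, {offset_m * 100:.1f} cm")
```

Under these assumptions the object lags by about 2 degrees, or roughly 3.5 cm at arm's length plus a little, which is easy to see against a real table edge that does not lag at all.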

Are there Similar Products out there?

The Vive Ultimate Tracker is now available, and it runs its own inside-out SLAM using inertial and optical sensors. These trackers are relatively expensive at the time of writing, and are primarily marketed for full body tracking in VR.

As these trackers are supposedly platform agnostic (do not require Steam VR base stations, can send tracking data to any device), I’m excited for the potential of the applications we envisioned for the Invoke Tracker to be realised on devices like the Apple Vision Pro or Meta Quest 3. In my view, trackers like these could be a great way to provide modular tracking of useful objects for pass-through mixed reality / spatial computing.

Portal

More about the Invoke Portal

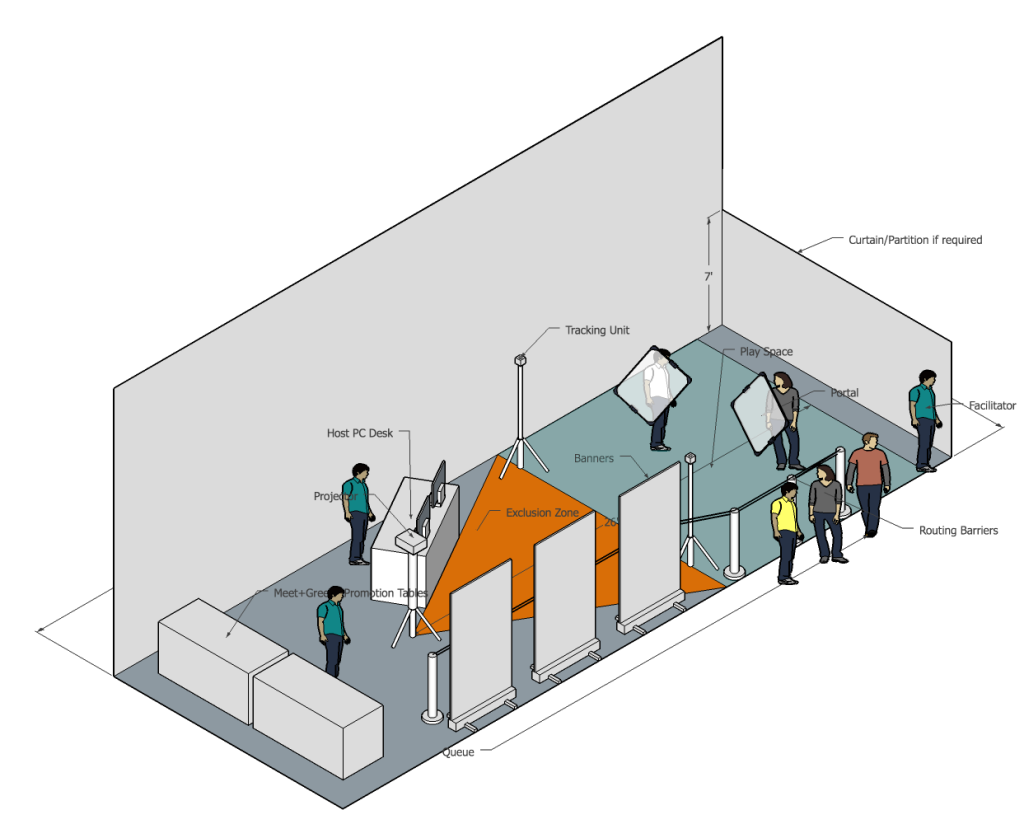

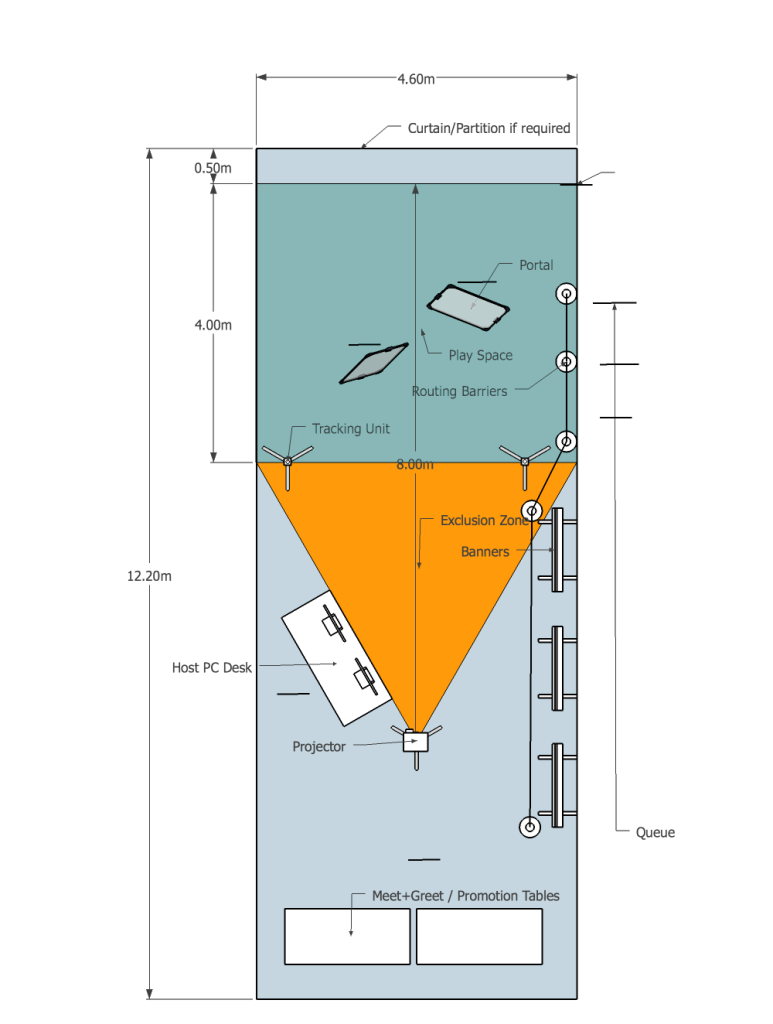

As Invoke was not gaining traction with the Tracker development, we pivoted towards a headset-less VR system we built called the Portal. The pick-up-and-play nature of the canvases lent itself well to eye-catching activations, where users of all abilities and ages can jump into a fun experience for 5 minutes or so.

We made good progress on developing this system, and were set to run an initial product showcase as invitees to Augmented World Expo (AWE) in California in May 2020. Unfortunately, COVID-19 interrupted these plans and forced an end to Invoke’s operations. During the planned weekend of the expo, the Santa Clara convention centre was converted into a temporary hospital for an overflow of COVID patients.

How it Works

The Portal canvases are tracked in realtime using Vive Trackers and the Steam VR tracking system. The canvases consist of a tensioned sheet of translucent film, held in a lightweight aluminium frame. Controllers on either side of the frame provide gripping points and allow the user to interact with the virtual environment in ways other than moving around spatially.

We built a calibration system to quickly map the position of a set of projectors to the Steam VR coordinates. This required a facilitator to align the Portal with a set of projected rays, and confirm when they matched. From this data, a set of vector operation algorithms could determine each projector’s 6DOF position with a high degree of accuracy in a matter of minutes.
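The calibration algorithms are not spelled out here, so the following is only a sketch of one plausible building block: finding the point closest to a set of 3D lines in the least-squares sense. It assumes each projected ray was sampled at two tracked positions (a hypothetical `near` and `far` point), and it recovers only the projector's position, not its orientation. All function names are illustrative.

```python
import math


def _det3(m):
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def _solve3(m, b):
    """Solve the 3x3 system m * p = b with Cramer's rule."""
    det = _det3(m)
    if abs(det) < 1e-12:
        raise ValueError("rays are parallel or too few to locate an origin")
    solution = []
    for col in range(3):
        replaced = [[b[r] if c == col else m[r][c] for c in range(3)]
                    for r in range(3)]
        solution.append(_det3(replaced) / det)
    return solution


def estimate_ray_origin(samples):
    """Least-squares point nearest to every ray.

    samples: list of (near_point, far_point) pairs, two tracked positions
    recorded along the same projected ray (an assumed procedure).
    """
    a = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for near, far in samples:
        d = [far[k] - near[k] for k in range(3)]
        norm = math.sqrt(sum(c * c for c in d))
        d = [c / norm for c in d]
        for r in range(3):
            for c in range(3):
                # (I - d d^T) projects onto the plane perpendicular to the ray.
                proj = (1.0 if r == c else 0.0) - d[r] * d[c]
                a[r][c] += proj
                b[r] += proj * near[c]
    return _solve3(a, b)


# Hypothetical samples along three rays that all leave a projector at (1, 2, 3).
samples = [
    ((2.0, 2.0, 3.0), (3.0, 2.0, 3.0)),
    ((1.0, 3.0, 3.0), (1.0, 4.0, 3.0)),
    ((2.0, 3.0, 4.0), (3.0, 4.0, 5.0)),
]
projector_position = estimate_ray_origin(samples)
```

With noisy real measurements the rays would not meet exactly, and the same solve returns the point that minimises the summed squared distance to all of them, which is why more rays generally give a steadier estimate.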

Once calibrated, the projectors emit a selective window of the virtual scene. This window view is generated as if seen by a camera at the user’s head position. The image lands on the back side of the moving canvas (back-projection). This means that, as a user, you can look straight into the canvas as if it were a literal “portal” into the virtual space, one you can carry around with you to explore.
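Rendering a surface as a window seen from the viewer's head is commonly done with an off-axis (asymmetric) perspective frustum. The sketch below computes the frustum extents from the canvas corners and an eye position, in the style of the parameters to an OpenGL `glFrustum` call. It is an illustration of the general technique rather than Invoke's renderer; the function name, the corner ordering and how the head position is obtained are all assumptions.

```python
import math


def _sub(a, b):
    return tuple(a[k] - b[k] for k in range(3))


def _dot(a, b):
    return sum(a[k] * b[k] for k in range(3))


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def _normalize(v):
    length = math.sqrt(_dot(v, v))
    return tuple(c / length for c in v)


def window_frustum(eye, lower_left, lower_right, upper_left, near):
    """Return (left, right, bottom, top) extents at the near plane.

    The three corners describe the tracked canvas in world coordinates;
    `eye` is the viewer's head position (assumed to be known).
    """
    right_axis = _normalize(_sub(lower_right, lower_left))
    up_axis = _normalize(_sub(upper_left, lower_left))
    normal = _normalize(_cross(right_axis, up_axis))  # points toward the viewer

    to_ll = _sub(lower_left, eye)
    to_lr = _sub(lower_right, eye)
    to_ul = _sub(upper_left, eye)

    distance = -_dot(to_ll, normal)  # eye-to-canvas distance along the normal
    if distance <= 0:
        raise ValueError("eye must be in front of the canvas")
    scale = near / distance
    return (_dot(right_axis, to_ll) * scale,
            _dot(right_axis, to_lr) * scale,
            _dot(up_axis, to_ll) * scale,
            _dot(up_axis, to_ul) * scale)


# A 1 m square canvas in the z = 0 plane, viewed from 1 m away.
corners = ((-0.5, -0.5, 0.0), (0.5, -0.5, 0.0), (-0.5, 0.5, 0.0))
centred = window_frustum((0.0, 0.0, 1.0), *corners, near=0.1)
shifted = window_frustum((0.25, 0.0, 1.0), *corners, near=0.1)
```

When the eye is centred the frustum is symmetric; when the viewer steps sideways the left and right extents become unequal, which is what makes the canvas behave like a window rather than a flat screen.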

This form of VR is more social than headset-based VR, as you can share the experience with others. Additionally, it scales nicely: extra projectors can cover a wider space or more angles, and multiple Portals can be used in the same play space at the same time.

Projection Mapping

More about Invoke’s Realtime Projection Mapping

This project was our first experiment with projection mapping. It was inspired by other projection mapping showcases (commonly applied to a static set or building); however, we had the ambition to make the projections follow dynamic objects using the Steam VR tracking technology.

“Box” is an outstanding production of a similar concept worth watching. Their system is also dynamic projection mapping, with the camera and projection surfaces running through an “on rails” routine. Our system aimed to allow for realtime interaction with users supplying varied input movements.

We created some cool demos on this system, with physics models adding to the immersion. Through user testing, we found the single viewing angle (from a set of play glasses that were actually a tracked virtual “camera”) was quite limiting. Users would also get between the target object and the projectors and not understand why they couldn’t see anything (they were casting a shadow over the object). These findings led to the development of the Invoke Portal, which was a more successful concept in practice.